I’ve spent the last three years living inside Large Language Models (LLMs). I’ve used them to code entire applications, write complex strategies, and brainstorm creative hooks. But recently, I noticed something alarming: I was starting to forget how to think from first principles.

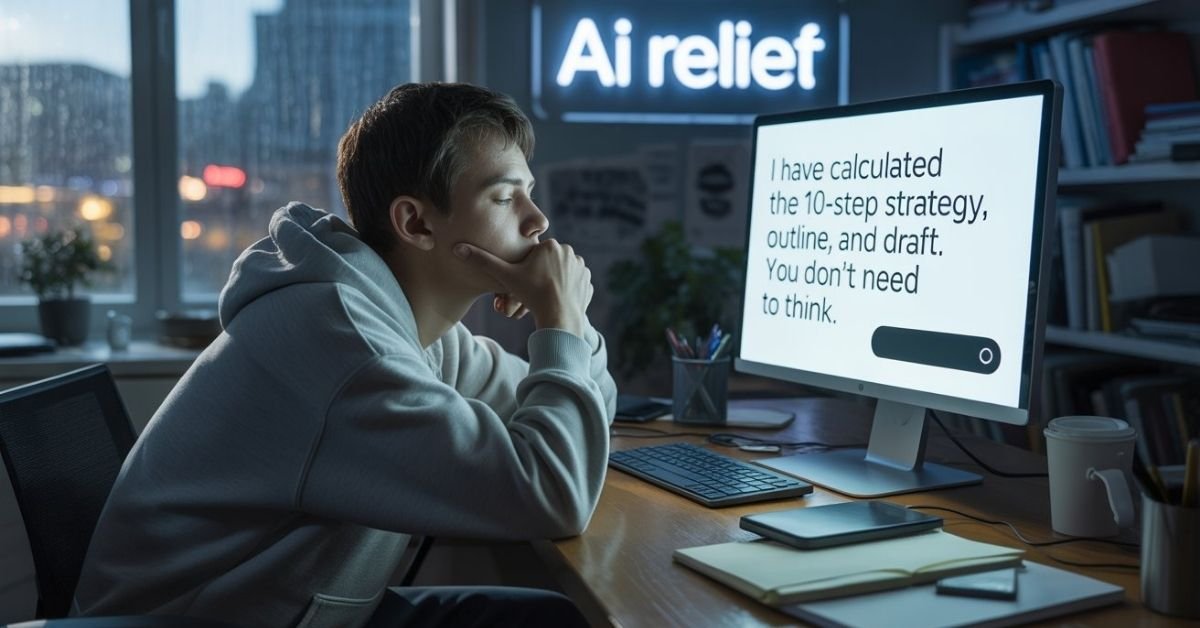

AI Laziness isn’t just about doing less work; it’s about cognitive offloading: the silent process in which your brain stops building the “muscles” it needs to solve problems because a chatbot is doing the heavy lifting. If you’re an AI user, you’re not just looking for a shortcut; you’re looking for a superpower.

But there is a fine line between a superpower and a crutch. This post is your roadmap to ensuring that AI remains your tool, not your replacement.

The Science of Cognitive Offloading

To understand why we get “lazy,” we have to look at how the brain works. Our brains are biologically optimized to conserve effort. If a machine can provide an answer in 2 seconds that would otherwise take us 20 minutes of deep thought, the brain will naturally take the path of least resistance.

The Feedback Loop of Atrophy

When we outsource our thinking, we stop exercising the neural pathways that deep problem-solving depends on. A recent study from MIT’s Media Lab found that participants who used ChatGPT to write essays showed weaker brain connectivity, measured with EEG, than participants who wrote without any tools.

I experienced this firsthand. Six months into using ChatGPT for everything, I realized I couldn’t structure a complex argument on a whiteboard without a prompt. I had fallen into the trap of metacognitive laziness. I was getting the “result” (the output) but losing the “process” (the critical thinking).

Watch: The Proof Behind AI Laziness

Before we dive into the solutions, watch this insightful video by Chris Sean that explains a groundbreaking MIT study. It reveals how using AI can actually cause parts of our brain to “shut off” during the creative process.

Identifying the 3 Pillars of AI Laziness

Before we fix the problem, we must diagnose it. In my research, I’ve identified three distinct ways AI users lose their edge:

1. Prompt Dependency

This is the inability to start a task without a digital nudge. If you find yourself staring at a blank screen, unable to type a single sentence until you’ve asked an AI for an “outline,” you have prompt dependency. Your creative “starter motor” is stalling.

2. Surface-Level Verification (The “Good Enough” Trap)

This occurs when a user accepts AI output without digging into the logic. Because the AI writes with such confidence, we assume the facts and the reasoning are sound. This leads to a decline in intellectual agility: the ability to spot nuances and errors in complex systems.

3. Skill Atrophy

Think of this as the “GPS Effect.” Just as many people can no longer navigate their own cities without Google Maps, professionals are losing foundational skills like coding logic, grammatical structure, or financial modeling because they “just let the AI do it.”

The “Active Co-Pilot” Framework

To stay ahead of the average user, you must change your relationship with the machine. Use these three tactical pillars to stay sharp.

Pillar I: The “Think-First” Protocol

Never start with a prompt. I make it a strict rule to spend at least 5 to 10 minutes sketching out my own logic, goals, and constraints on paper (or in a digital notepad) before I open a chatbot.

- Action: Define your own “Internal Prompt” first.

- Result: This ensures the “architectural” part of your brain stays active.

Pillar II: Moving AI to the “Review Phase”

The biggest mistake is using AI for the “Drafting Phase.” Instead, write your messy, imperfect first draft yourself. Then, bring in the AI as a High-Level Editor.

- The Prompt to Use: “Here is my draft. Identify the logical gaps, challenge my assumptions, and suggest three ways this could be more concise.”

- Why it works: This keeps you in the driver’s seat while using the AI to refine your existing thoughts.

Pillar III: The “Inversion” Method for Learning

If you use AI to solve a complex problem (like a coding bug or a tax calculation), don’t just copy-paste the answer.

- The Technique: Ask the AI: “Explain the step-by-step logic behind this solution as if I have to teach it to a class.”

- The Final Step: Close the AI window and try to rewrite the solution from scratch. If you can’t do it, you haven’t learned it; you’ve only borrowed it.

Modern Intellectual Agility

In the age of prompt engineering and feedback loops, the most valuable skill isn’t knowing the answer; it’s knowing how to validate it. To avoid skill atrophy, you must treat every AI interaction as a training session.

We are seeing a shift in the job market. Companies no longer just want “AI users”; they want “AI Architects” who understand neural engagement and can direct the machine without being led by it.

Conclusion

AI is the most powerful bicycle for the mind ever created. But if you stop pedaling, you’ll eventually fall off. By implementing the Active Co-Pilot Framework, you ensure that your cognitive muscles stay strong, your creativity remains authentic, and you stay ahead of those who have surrendered their thinking to the algorithm.

Call to Action (CTA):

Don’t let your cognitive muscles waste away. Download my “Active Co-Pilot” Checklist below to audit your AI usage and ensure you’re getting sharper, not lazier.

[ Download the Checklist Now ]

FAQs

Does AI make people lazy?

AI can increase behavioral laziness if used as a replacement for effort, but it acts as a productivity multiplier when used to automate repetitive, low-value tasks.

Does ChatGPT make you lazy?

ChatGPT promotes “metacognitive laziness” when users copy-paste answers without thinking, but it fosters creativity for those who use it as a brainstorming partner.

Is AI fatigue real?

Yes, AI fatigue occurs when users feel overwhelmed by the constant stream of AI-generated content and the mental pressure to keep up with rapid technological shifts.

Is AI a sloth?

AI isn’t a “sloth” itself, but it can create a “sloth-like” dependency in users who stop exercising their critical thinking and problem-solving muscles.

What did Stephen Hawking say about AI?

Stephen Hawking warned that full AI could spell the end of the human race if it eventually supersedes human intelligence and starts redesigning itself.

Does AI make you lazier or smarter?

It depends on the user: you become lazier if you outsource your thinking, but smarter if you use AI to learn complex topics faster and deeper.

When the brain is on “cruise control,” we often fail to spot when the machine is confidently giving us wrong information. To understand why this happens and how to catch it, check out our deep dive below.

👉 Read Next: The Truth About AI Hallucinations: Why Chatbots Make Things Up

Tech Troubleshooting Expert and Lead Editor at TechCrashFix.com. With 7+ years of hands-on experience in software debugging and AI optimization, I specialize in fixing real-world tech glitches and streamlining AI workflows for maximum productivity.