BLUF: In the 2026 search landscape, the False Positive Content Error stands as the biggest hurdle for high-level creators. Detectors like Originality.ai (v3.0) and GPTZero calculate statistical probability; they don’t hunt for truth. To protect your reputation, you must master Adversarial Stylometry: the art of writing in a way that stays unpredictable to a machine but crystal clear to a human.

The Crisis of the “Clean Writer”

Why do the most talented professional writers trigger the False Positive Content Error so often? The answer lies in the “Standardization of Language.”

Professional styles, especially in B2B SaaS, legal, and medical niches, prioritize clarity and brevity. Ironically, LLMs follow these exact same parameters. When you write “too perfectly,” you strip away the “Linguistic Noise” that signals a human creator.

The Evolution of Detection in 2026

Early detectors relied on simple word-level patterns, but 2026 models use Semantic Vector Analysis: they represent ideas as vectors and measure the distance between them. If your logic follows a “statistically perfect” path, the system flags you.
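To make “distance between ideas” concrete, here is a minimal sketch. It is only an illustration under stated assumptions. Detector vendors don’t publish how they do this, and real systems use learned embeddings. This sketch substitutes plain word counts (the hypothetical helper `bag_of_words`) and measures cosine distance between consecutive sentences with `idea_jumps`. Small, uniform jumps correspond to the smooth, “statistically perfect” path described above.

```python
import math
from collections import Counter


def bag_of_words(sentence):
    # Toy stand-in for a real sentence embedding: lowercase word counts.
    return Counter(word.strip(".,;:!?").lower() for word in sentence.split())


def cosine_distance(a, b):
    """1 - cosine similarity between two sparse count vectors."""
    dot = sum(a[k] * b[k] for k in a.keys() & b.keys())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    if norm_a == 0 or norm_b == 0:
        return 1.0  # treat empty sentences as maximally distant
    return 1.0 - dot / (norm_a * norm_b)


def idea_jumps(sentences):
    """Distance between each pair of consecutive sentences (0 = same idea, 1 = unrelated)."""
    vectors = [bag_of_words(s) for s in sentences]
    return [cosine_distance(x, y) for x, y in zip(vectors, vectors[1:])]


print(idea_jumps(["The cat sat.", "The cat sat.", "Stocks fell sharply."]))
```

A repeated sentence gives a jump near 0, and an unrelated one gives 1.0. With real embeddings, the same loop would show how evenly your argument moves from point to point.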

Why do AI detectors trigger a False Positive Content Error?

To fix the problem, we must dissect the math. Modern Linguistic Fingerprinting judges your work using a dual-engine approach:

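- The Perplexity Metric (The “Surprise” Factor): AI models like GPT-5 or Claude 4 function as predictive engines that guess the most likely next word. Perplexity measures how surprised a model is by the words you actually chose; the lower the score, the more machine-like your text looks.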

- Low Perplexity (AI Flag): “The CEO decided to implement a new strategy to increase revenue.” An AI could predict almost every word here.

- High Perplexity (Human Shield): “The CEO pivoted, tossing the old playbook into the shredder to hunt for ‘black swan’ revenue streams.” This phrasing surprises the AI.

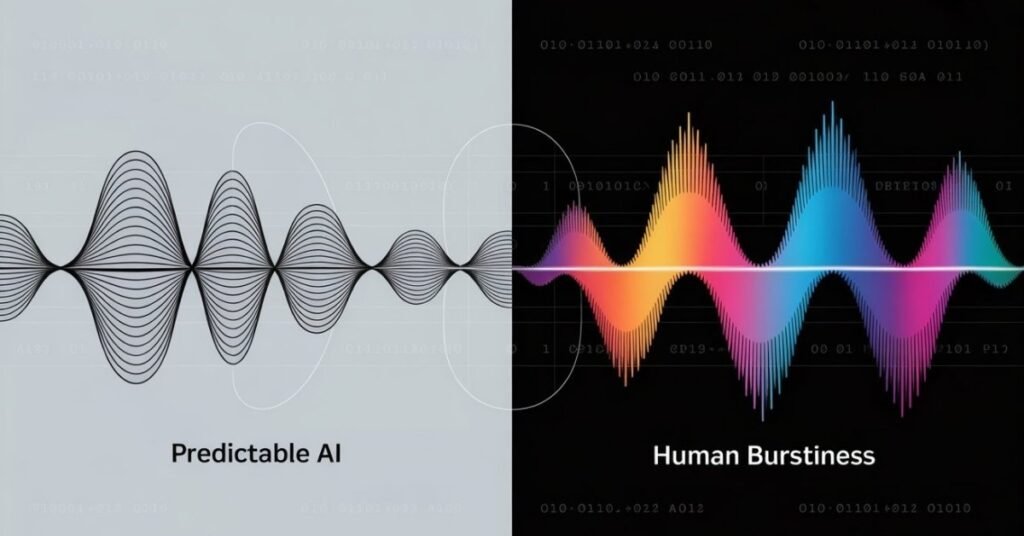

- The Burstiness Metric (Structural Rhythm): Humans naturally write with inconsistency. We change our rhythm based on our moods. This shows up as Burstiness: the variation in sentence length.

- The Machine Flaw: AI produces a “flatline” rhythm. Even with good sentences, the length usually stays within a narrow 10-word margin. This lack of variance triggers the error.
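Both engines of the dual-engine approach can be sketched in a few lines of Python. This is a simplified illustration, not how Originality.ai or GPTZero actually score text. `perplexity` takes per-word probabilities that would normally come from a language model; the numbers here are hypothetical. `burstiness` uses the standard deviation of sentence length as one common proxy for rhythm, and it relies on a naive sentence splitter.

```python
import math
import re
import statistics


def perplexity(token_probs):
    """exp of the mean negative log-probability the model assigned to each token."""
    if not token_probs:
        raise ValueError("need at least one token probability")
    mean_nll = -sum(math.log(p) for p in token_probs) / len(token_probs)
    return math.exp(mean_nll)


def sentence_lengths(text):
    # Naive split on runs of ., !, or ?; real tools use proper sentence tokenizers.
    sentences = [s for s in re.split(r"[.!?]+\s*", text) if s.strip()]
    return [len(s.split()) for s in sentences]


def burstiness(text):
    """Population standard deviation of sentence length, in words."""
    lengths = sentence_lengths(text)
    if len(lengths) < 2:
        return 0.0
    return statistics.pstdev(lengths)


# Hypothetical probabilities a model might assign to each word of two sentences.
predictable = [0.9, 0.8, 0.7, 0.9, 0.8]   # the model guessed each word easily
surprising = [0.2, 0.05, 0.1, 0.3, 0.02]  # the model was often wrong
print(f"perplexity: {perplexity(predictable):.2f} vs {perplexity(surprising):.2f}")

flat = "The team met on Monday. The team set a new goal. The team left at noon."
varied = ("It failed. We rebuilt the onboarding flow from scratch over three "
          "weekends, testing every screen with real customers; churn fell. "
          "Then we shipped it.")
print(f"burstiness: {burstiness(flat):.2f} vs {burstiness(varied):.2f}")
```

The script prints roughly 1.22 vs 11.08 for perplexity and 0.47 vs 7.12 for burstiness. The flat sample’s sentences (5, 6, and 5 words) sit inside the narrow margin described above. The varied sample (2, 18, and 4 words) does not.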


Step-by-Step Guide to Fixing the Error

I developed this Human-in-the-loop (HITL) workflow to inject “Human Noise” into your work without losing quality.

Step 1: Inject “Neural Entropy”

Neural Entropy involves using non-linear thought patterns.

- Action: Create your own analogies instead of using standard industry clichés.

- Example: Don’t say “scaling a business”; say “overclocking the company’s cultural engine.” A language model rarely predicts a metaphor like this, so it raises your perplexity.
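You can make this step easier with a quick script that finds the stock phrases worth replacing with your own analogies. Here is a minimal sketch. The `STOCK_PHRASES` list is a hypothetical starter set, not an official cliché list, so swap in the phrases common in your own niche.

```python
import re

# Hypothetical starter list; replace with phrases common in your own niche.
STOCK_PHRASES = [
    "scaling a business",
    "move the needle",
    "low-hanging fruit",
    "game changer",
    "best-in-class",
]


def find_stock_phrases(text, phrases=STOCK_PHRASES):
    """Return (phrase, position) pairs for every stock phrase found, in reading order."""
    lowered = text.lower()
    hits = []
    for phrase in phrases:
        for match in re.finditer(re.escape(phrase), lowered):
            hits.append((phrase, match.start()))
    return sorted(hits, key=lambda hit: hit[1])


for phrase, pos in find_stock_phrases(
        "Grab the low-hanging fruit first; scaling a business comes later."):
    print(f"replace '{phrase}' at character {pos}")
```

Each hit is a spot where an original metaphor, like the “cultural engine” example above, can replace a phrase a model would predict easily.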

Step 2: Vary Sentence Length Aggressively

Break the “Flatline” rhythm by consciously varying your syntax. Use the 3-30-15 Rule:

- Start with a 3-word punchy sentence.

- Follow with a 30-word complex sentence using a semicolon or em-dash.

- Conclude with a 15-word standard sentence. This structural “heartbeat” can lower AI scores right away.
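To check a paragraph against the 3-30-15 Rule before you publish, you can count words per sentence. Here is a minimal sketch. `follows_3_30_15` is a hypothetical helper, and its `tolerance` of two words is an assumption, since the rule as stated uses exact counts. The sentence splitter is naive and breaks only on ., !, or ?, so semicolons and em-dashes stay inside the long sentence.

```python
import re


def sentence_lengths(text):
    # Naive split on runs of ., !, or ?; semicolons do not end a sentence.
    sentences = [s for s in re.split(r"[.!?]+\s*", text) if s.strip()]
    return [len(s.split()) for s in sentences]


def follows_3_30_15(paragraph, targets=(3, 30, 15), tolerance=2):
    """True if the paragraph has exactly three sentences near the target word counts."""
    lengths = sentence_lengths(paragraph)
    if len(lengths) != len(targets):
        return False
    return all(abs(n - t) <= tolerance for n, t in zip(lengths, targets))


example = (
    "Traffic just collapsed. "
    "Our rankings slid for six straight weeks after the core update, and every "
    "dashboard we trusted told a different story; we finally traced the drop to "
    "thin, duplicated product pages. "
    "Rewriting those pages by hand took a month, but organic traffic recovered "
    "steadily by spring."
)
print(sentence_lengths(example), follows_3_30_15(example))
```

The sample paragraph splits into 3, 30, and 15 words and passes. A paragraph of three similar mid-length sentences fails on the 30-word slot.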

Step 3: The “I/We” Authority Factor

Google’s 2026 guidelines prioritize Experience. AI cannot “experience” a product.

- Implementation: Add a “Personal Insight” box or a paragraph starting with: “In my 12 years of managing SEO campaigns, I’ve found that…”

- Why it works: These phrases introduce autobiographical memory markers that AI rarely produces.

Industry-Specific Strategies

- Legal & Technical: Since you must use specific jargon like “Habeas Corpus,” surround the technical terms with highly “Bursty” commentary. Use a personal anecdote to offset the low perplexity of the jargon.

- Academic: Students are often unfairly flagged. To fight this, use Adversarial Stylometry. Intentionally choose the active voice where AI defaults to the passive. Instead of saying “It was discovered that…”, simply say “We found that…”
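To find passive constructions worth rewriting in a draft, a rough pattern match is often enough. Here is a minimal sketch, not a grammar checker. The hypothetical `find_passive` helper flags a form of “to be” followed by a word ending in -ed or -en. That heuristic misses irregular participles such as “was made” and can wrongly flag adjectives such as “was tired.”

```python
import re

# Crude heuristic: a form of "to be" followed by a word ending in -ed or -en.
# It misses irregular participles ("was made") and can flag adjectives ("was tired").
PASSIVE_PATTERN = re.compile(
    r"\b(am|is|are|was|were|be|been|being)\s+(\w+(?:ed|en))\b",
    re.IGNORECASE,
)


def find_passive(text):
    """Return the matched 'be + participle' phrases in reading order."""
    return [m.group(0) for m in PASSIVE_PATTERN.finditer(text)]


print(find_passive("It was discovered that the dose was too high."))
print(find_passive("We found that the dose was too high."))
```

The first sentence yields `['was discovered']`, a cue to rewrite it in the active voice. The active version yields an empty list.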

How to Handle Client Accusations

If a client accuses you of using AI, don’t get defensive. Use the Proof of Process (PoP) defense:

- Version History: Write in a cloud-based editor like Google Docs and show the client the timestamped revisions in its version history; they reveal how long you actually worked on the piece. No one spends 6 hours on a “one-click” AI generation.

- The “Detector Bias” Test: Run a famous historical text (like the Declaration of Independence) through the detector. If it returns a significant AI score, such as 40%, you have exposed the tool’s inherent bias, because that text was written centuries before LLMs existed.

- The “Draft” Trail: Share your messy outlines. AI doesn’t make “human mistakes” in drafts; it produces polished blocks from the start.
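To turn a version history into a single “time spent writing” figure for a client, you can add up the short gaps between revisions. Here is a minimal sketch. It assumes you have copied the revision timestamps by hand into a list; it does not call any Google Docs API. The hypothetical `idle_gap` of 15 minutes is an assumption about when a pause counts as a break rather than writing time.

```python
from datetime import datetime, timedelta


def active_writing_time(timestamps, idle_gap=timedelta(minutes=15)):
    """Sum the gaps between consecutive revisions, skipping gaps longer than idle_gap."""
    ordered = sorted(timestamps)
    total = timedelta()
    for earlier, later in zip(ordered, ordered[1:]):
        gap = later - earlier
        if gap <= idle_gap:
            total += gap
    return total


# Hypothetical revision times copied from a document's version history.
revisions = [
    datetime(2026, 3, 2, 9, 0),
    datetime(2026, 3, 2, 9, 10),
    datetime(2026, 3, 2, 9, 20),
    datetime(2026, 3, 2, 13, 0),   # long lunch break: not counted
    datetime(2026, 3, 2, 13, 5),
]
print(active_writing_time(revisions))  # prints 0:25:00
```

Sorting first means the timestamps can be pasted in any order. The idle threshold keeps a long break from inflating the total.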

2026 AI Detector Comparison Table

Understanding the “Detection Threshold” of each tool helps you choose where to scan your work.

| AI Detector | Accuracy | False Positive Rate | Best Use Case |
|---|---|---|---|
| Originality.ai (v3.0) | 99% | 1.5% | SEO & Marketing Agency Work |
| Turnitin AI | 98% | <1.0% | Academic & Scientific Papers |
| GPTZero | 96% | 3.5% | General Internal Content |
| Copyleaks | 94% | 5.0% | Multi-language detection |
| ZeroGPT | 80% | 20%+ | AVOID: High False Positive Rate |
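A false positive rate matters more than it looks once you publish at volume. This short worked example turns the table’s rates into the number of human articles you should expect to be flagged. It assumes each article is flagged independently at the listed rate, which is a simplification. It also uses 20% for ZeroGPT, the lower bound of “20%+.”

```python
def expected_false_flags(num_articles, fp_rate):
    """Average number of human-written articles a detector would wrongly flag."""
    return num_articles * fp_rate


def chance_of_any_false_flag(num_articles, fp_rate):
    """Probability that at least one of num_articles is wrongly flagged.

    Assumes every article is flagged independently at the same rate.
    """
    return 1 - (1 - fp_rate) ** num_articles


# Rates from the comparison table, written as fractions rather than percentages.
for name, rate in [("Originality.ai", 0.015), ("GPTZero", 0.035), ("ZeroGPT", 0.20)]:
    print(f"{name}: {expected_false_flags(100, rate):.1f} flags per 100 articles, "
          f"{chance_of_any_false_flag(10, rate):.0%} chance of at least one in 10")
```

At GPTZero’s listed 3.5%, a writer submitting ten fully human articles has roughly a 30% chance that at least one gets flagged.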

The Future of the False Positive Content Error

As we move further into 2026, the line between human and AI writing will continue to blur. Google has already hinted that “AI-assisted” content is acceptable as long as it provides Information Gain. The danger isn’t the AI score itself; it’s the lack of “Unique Insight.”

If your content provides a new perspective, a unique data point, or a first-hand account, Google’s algorithms can still reward it, even if a flawed third-party detector screams “AI.”

Quick FAQs:

Q: What is a False Positive Content Error?

A: It’s when a detector incorrectly flags human writing as AI. It happens because your writing is “too clean” or follows predictable, logical patterns.

Q: Can over-editing cause a false positive?

A: Yes. Over-editing for “perfect” grammar removes the human quirks that detectors use to verify authenticity.

Q: What is the fastest way to lower an AI score?

A: Vary your sentence lengths. Mix very short sentences with long, complex ones to create a “human” rhythm (Burstiness).

Q: Which AI detectors have the lowest false positive rates?

A: Originality.ai and Turnitin have the lowest false positive rates (under 2%). Avoid ZeroGPT for professional work.

Q: How do I prove to a client that I wrote my content?

A: Show your Google Docs Version History. It proves the content grew sentence-by-sentence over time rather than being pasted all at once.

Further Reading: Beyond the Detection Error

While the False Positive Content Error is often a result of being “too professional,” there is another side to the AI coin: AI Laziness. If you’ve ever noticed your AI assistant giving short, uninspired, or repetitive answers, you might be facing a different kind of productivity hurdle.

Check out our deep dive: How to Combat AI Laziness and Get High-Quality Output

Tech Troubleshooting Expert and Lead Editor at TechCrashFix.com. With 7+ years of hands-on experience in software debugging and AI optimization, I specialize in fixing real-world tech glitches and streamlining AI workflows for maximum productivity.