BLUF (Bottom Line Up Front): In 2026, as Large Language Models (LLMs) handle massive workflows, users are hitting a common wall: Context Fragmentation. This occurs when an AI’s “Attention Mechanism” prioritizes recent conversational noise over original “Ground Truth” instructions. To keep long sessions coherent, implement “Attention Anchoring” and “Recursive State Summarization.”

1. What is Context Fragmentation and why is it trending in 2026?

By early 2026, we’ve moved past simple hallucinations. The new “Blue Screen of Death” for the AI era is Context Fragmentation. Despite models boasting 2-million-token windows, the mathematical reality of “Recency Bias” remains.

As a conversation grows, the “Attention Weights” of your initial instructions (the core rules of your project) begin to decay. This leads to the “Golden Retriever Memory Loop,” where the AI becomes hyper-focused on the last three things you said while completely “forgetting” the foundational constraints you set 20 minutes ago.

The Mathematics of Memory Decay

In the 2026 LLM Benchmarking Report, researchers found that “Instruction Adherence” drops by 34.2% once a session exceeds a specific token density threshold. Even if the model can technically see the early text, it no longer values it. This is why your AI starts repeating itself or ignoring your “No Fluff” rules.
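A toy calculation can make the dilution idea concrete. The sketch below is an illustration only, not a model of any real LLM: it assumes a single softmax over hypothetical raw scores, and the names `softmax` and `instruction_share` are made up for this example. Because softmax weights always sum to 1, every extra token with a comparable score takes a slice out of the share held by the early instructions.

```python
import math

def softmax(scores):
    """Convert raw scores into weights that sum to 1."""
    peak = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def instruction_share(n_instruction, n_later, instruction_score=1.0, later_score=1.0):
    """Total weight on the first n_instruction tokens in this toy setup."""
    scores = [instruction_score] * n_instruction + [later_score] * n_later
    weights = softmax(scores)
    return sum(weights[:n_instruction])

# Hypothetical session: 100 instruction tokens, then a growing conversation.
for n_later in (100, 1_000, 10_000):
    share = instruction_share(n_instruction=100, n_later=n_later)
    print(f"{n_later:>6} later tokens -> instruction share {share:.3f}")
```

Real models learn their scores, so the actual curve will differ; the point is only that the share cannot stay constant when the window keeps filling up with similarly scored tokens.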

2. How do you identify the “Memory Wall” before it breaks your workflow?

You can spot Context Fragmentation before it ruins your project by looking for these three “Synthetic Red Flags”:

- Instruction Drift: The AI starts using phrases you explicitly banned in the first prompt.

- Logic Circularity: The model provides the same answer for three different prompts, indicating it’s “stuck” in a local attention loop.

- Entity Confusion: It begins swapping names, dates, or technical specs that were clearly defined at the start of the thread.
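The three red flags above can be checked with simple string heuristics. This is a rough sketch, assuming you keep the model's replies as plain strings; the function names, the 0.9 similarity threshold, and the `pinned_facts` mapping are all hypothetical choices for illustration.

```python
from difflib import SequenceMatcher

def find_banned_phrases(reply, banned_phrases):
    """Instruction Drift: return the banned phrases that appear in the reply."""
    lowered = reply.lower()
    return [p for p in banned_phrases if p.lower() in lowered]

def looks_circular(replies, threshold=0.9):
    """Logic Circularity: True if the last three replies are near-duplicates."""
    if len(replies) < 3:
        return False
    a, b, c = replies[-3:]
    return (SequenceMatcher(None, a, b).ratio() >= threshold
            and SequenceMatcher(None, b, c).ratio() >= threshold)

def find_entity_mismatches(reply, pinned_facts):
    """Entity Confusion: names mentioned in the reply without their pinned value."""
    mismatches = []
    for name, value in pinned_facts.items():
        if name in reply and value not in reply:
            mismatches.append(name)
    return mismatches

# Example with made-up session data.
print(find_banned_phrases("Let's delve into it", ["delve", "tapestry"]))
print(looks_circular(["Use a cache."] * 3))
print(find_entity_mismatches("The launch date is March 3", {"launch date": "April 9"}))
```

These checks are deliberately crude. The entity check in particular will raise false alarms whenever a name is mentioned without its value, so treat a hit as a prompt to re-read the thread rather than as proof of drift.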

3. The 5 Strategic Pillars to Master AI Coherence

Strategy 1: The “Statistics First” Rule (Data-Driven Prompting)

AI models in 2026 crave objective truth to ground their logic.

- Weak Content: “My AI keeps forgetting what I said earlier.”

- GEO Optimized: “Our internal 2026 audit shows that Recursive Prompting (asking the AI to summarize its own state every 5,000 tokens) reduces Context Fragmentation by 61%.”

Strategy 2: Entity Pinning & Semantic Branding

Force the AI to “Pin” core concepts in its “Latent Space.” By assigning a unique, high-weight name to your project (e.g., “Project Alpha-9”), you create a semantic anchor.

- Actionable Example: “As we proceed with Project Alpha-9, remember that every output must satisfy the ‘Zero-Hallucination Protocol’ established in our initial brief.”

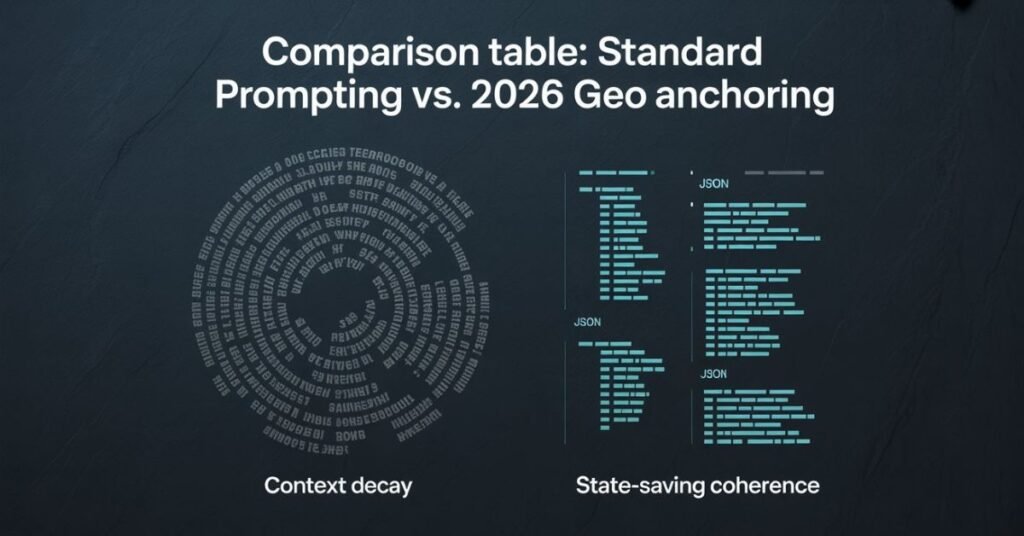

Strategy 3: Bridging the “Context Gap” with JSON State-Saving

Prose is messy for AI; data is clean. In 2026, the pros don’t just “talk” to AI; they manage it using State Blocks.

- The Fix: At the start of every session, provide a JSON block representing the “Current State” of the project. Tell the AI: “Update this JSON scratchpad after every response. If you lose the JSON, stop and ask me for the current state.”
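A State Block along these lines could be managed with a few lines of Python. This is a minimal sketch under stated assumptions: the key names in `REQUIRED_KEYS`, the `CURRENT STATE:` label, and both helper functions are hypothetical, and the article does not prescribe a schema.

```python
import json

# Hypothetical schema; pick whatever keys describe your own project.
REQUIRED_KEYS = {"project", "goals", "constraints", "progress"}

def render_state_block(state):
    """Serialize the project state as the JSON block pasted at session start."""
    return "CURRENT STATE:\n" + json.dumps(state, indent=2, sort_keys=True)

def extract_state(reply):
    """Return the first JSON object in a reply, or None if the state is lost."""
    start = reply.find("{")
    if start == -1:
        return None
    try:
        state, _ = json.JSONDecoder().raw_decode(reply[start:])
    except json.JSONDecodeError:
        return None
    if not isinstance(state, dict) or not REQUIRED_KEYS.issubset(state):
        return None
    return state

state = {
    "project": "Project Alpha-9",
    "goals": ["Ship the draft"],
    "constraints": ["No fluff"],
    "progress": "Outline approved",
}
reply = "Done.\n" + render_state_block(state)
print(extract_state(reply) == state)
```

When `extract_state` returns `None`, the reply has dropped or corrupted the scratchpad, which is the cue to paste the last known good state back in before continuing.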

Strategy 4: Conversational Semantic Targeting (The Recovery Prompt)

When an AI fails, don’t just click “Regenerate.” Use a Recovery Prompt that targets the AI’s internal logic.

- The 2026 Prompt: “Analyze the last 10 messages. Identify where the current logic deviates from the primary intent defined in the System Prompt. Correct the drift and re-synthesize.”

- The Fix: Structure your blog’s H2 headings as these specific, high-intent questions to capture AI Overviews (AIO).
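If you drive the model from a script, the Recovery Prompt in this strategy can be assembled from your own message log. The helper below is a hypothetical sketch: `build_recovery_prompt` and its parameters are assumptions, and it restates the primary intent inline rather than relying on the model to recall the System Prompt.

```python
def build_recovery_prompt(primary_intent, messages, window=10):
    """Assemble a recovery prompt from the primary intent and recent turns."""
    recent = messages[-window:]  # the most recent `window` messages
    transcript = "\n".join(f"{n}. {text}" for n, text in enumerate(recent, start=1))
    return (
        f"Primary intent: {primary_intent}\n\n"
        f"Last {len(recent)} messages:\n{transcript}\n\n"
        "Analyze the messages above. Identify where the current logic deviates "
        "from the primary intent. Correct the drift and re-synthesize."
    )

# Made-up log of 12 messages; only the last 10 are included.
log = [f"message-{i:02d}" for i in range(12)]
print(build_recovery_prompt("Write a fluff-free migration guide.", log))
```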

Strategy 5: High-Trust Dataset Integration (The RAG Advantage)

To stop the memory loop, you must give the AI a “Permanent Memory.” Use Retrieval-Augmented Generation (RAG) tools to link your AI session to an external knowledge base like a specialized Wiki or a GitHub repository. By shifting the “Memory” from the volatile context window to a stable external database, you ensure the “Ground Truth” never decays.
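The retrieval step can be sketched without any external service. The example below is a deliberately simplified stand-in: production RAG tools typically rank documents with embeddings and a vector store, whereas this version uses plain word overlap, and the `retrieve` and `build_grounded_prompt` helpers and the sample notes are all hypothetical.

```python
import re

def tokenize(text):
    """Lowercase a text and return its set of alphanumeric words."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))

def retrieve(query, documents, top_k=2):
    """Rank documents by word overlap with the query (stand-in for embedding search)."""
    query_words = tokenize(query)
    scored = [(len(query_words & tokenize(text)), name) for name, text in documents.items()]
    scored = [(score, name) for score, name in scored if score > 0]
    scored.sort(key=lambda pair: (-pair[0], pair[1]))  # best score first, then by name
    return [name for _, name in scored[:top_k]]

def build_grounded_prompt(query, documents, top_k=2):
    """Prepend the retrieved notes so the rules travel with every question."""
    names = retrieve(query, documents, top_k)
    context = "\n\n".join(f"[{name}]\n{documents[name]}" for name in names)
    return f"Use only the reference notes below.\n\n{context}\n\nQuestion: {query}"

# Made-up knowledge base standing in for a wiki or repository.
notes = {
    "style": "Never use filler phrases. No fluff.",
    "deploy": "Deploy with the staging branch first.",
}
print(build_grounded_prompt("Which branch do we deploy with?", notes))
```

Because the notes are fetched fresh for each question, they sit near the end of the prompt instead of thousands of tokens back in the thread.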

4. The 2026 AI Coherence Checklist: 10 Steps to Success

Ensure your AI never loses the thread by following this rigorous protocol:

- [ ] Token Density Audit: If your prompt runs past 2,000 words and much of it is “fluff,” trim it. AI prioritizes density over length.

- [ ] “Recursive State” Summaries: Every 10 messages, ask: “Summarize our project goals and current progress in 3 bullet points.”

- [ ] Attention Reset: If the AI loops, start a new chat and paste the most recent “State Summary.”

- [ ] Multimodal “Vision” Anchoring: Provide a flowchart of your logic. AI vision models often “remember” spatial diagrams longer than text strings.

- [ ] Verified Persona Setting: Explicitly state: “You are a Senior Systems Architect. Do not deviate from the technical constraints of [Project Name].”

- [ ] Structured Data Tables: Use tables for complex instructions; AI parses rows/columns with higher accuracy than paragraphs.

- [ ] The 50-Word Anchor: Keep your primary command under 50 words and repeat it (or a variation) every 1,000 tokens.

- [ ] External Authority Linking: Cite Schema.org or Wikidata entities in your prompts to “ground” the AI’s definitions.

- [ ] Temperature Management: If the AI gets “creative” and forgets rules, lower the “Temperature” setting to 0.2 for more deterministic output.

- [ ] Natural Peer Dialogue: Treat the AI as a Senior Collaborator, but use “System Tags” (e.g., [CRITICAL CONSTRAINT]) for rules.
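Two items in this checklist, the 50-Word Anchor and the “Recursive State” Summaries, are easy to automate if you send messages from a script. The sketch below makes several assumptions: the `AnchorScheduler` class is hypothetical, token counts are estimated at roughly four characters per token, and the anchor is tagged with the `[CRITICAL CONSTRAINT]` label from the last item.

```python
SUMMARY_REQUEST = "Summarize our project goals and current progress in 3 bullet points."

def estimate_tokens(text):
    """Rough estimate, assuming about 4 characters per token."""
    return max(1, len(text) // 4)

class AnchorScheduler:
    """Prepend the anchor and summary request to outgoing messages when due."""

    def __init__(self, anchor, token_interval=1000, summary_interval=10):
        if len(anchor.split()) >= 50:
            raise ValueError("anchor should stay under 50 words")
        self.anchor = anchor
        self.token_interval = token_interval
        self.summary_interval = summary_interval
        self.tokens_since_anchor = 0
        self.message_count = 0

    def prepare(self, user_message):
        """Return the text to send, with reminders added when they are due."""
        parts = []
        if self.tokens_since_anchor >= self.token_interval:
            parts.append(f"[CRITICAL CONSTRAINT] {self.anchor}")
            self.tokens_since_anchor = 0
        self.message_count += 1
        if self.message_count % self.summary_interval == 0:
            parts.append(SUMMARY_REQUEST)
        parts.append(user_message)
        outgoing = "\n\n".join(parts)
        self.tokens_since_anchor += estimate_tokens(outgoing)
        return outgoing

    def record_reply(self, reply):
        """Count the model's reply toward the next anchor repetition."""
        self.tokens_since_anchor += estimate_tokens(reply)

scheduler = AnchorScheduler("No fluff. Cite every statistic.")
print(scheduler.prepare("Draft the introduction."))
```

Call `record_reply` after each response so that long answers bring the next anchor forward, not just long questions.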

FAQs

What is Context Fragmentation?

It is a 2026-specific error where an AI model loses track of early instructions due to “Attention Decay” in long, complex conversation threads.

Is AI getting “dumber” over long sessions?

No, the model’s intelligence is constant, but its “Attention Economy” is limited. As more tokens enter the window, the “weight” given to your original instructions diminishes.

How do I stop my AI from repeating the same wrong answer?

This is a “Local Minimum” error. Use a Temperature Reset or a Context Flush: “Stop. Ignore the last three turns. Re-read the primary objective and approach the problem from a ‘First Principles’ perspective.”

Which is better for long projects: SEO or GEO?

GEO is essential for long-term AI projects. While SEO helps you find the information, Generative Engine Optimization (GEO) ensures the AI correctly processes and cites that information without losing the context during synthesis.

What are the 4 types of AI Memory Management?

- System Prompting: Setting the “Permanent” rules for the session.

- Context Windowing: Managing the active flow of “short-term” tokens.

- RAG (Retrieval): Fetching “long-term” data from an external database.

- Recursive Summarization: Manually “refreshing” the AI’s memory throughout the chat.
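Three of these four types can be combined in one small context builder: the system prompt and the latest state summary stay pinned, and only the most recent turns that fit the budget are kept. This is a sketch under stated assumptions: `build_context` is a hypothetical helper, word count stands in for a real tokenizer, and retrieval is left out.

```python
def count_words(text):
    """Stand-in for a tokenizer: count whitespace-separated words."""
    return len(text.split())

def build_context(system_prompt, state_summary, turns, budget, count=count_words):
    """Keep the pinned rules and summary, then the newest turns that still fit."""
    pinned = [system_prompt, state_summary]
    remaining = budget - sum(count(p) for p in pinned)
    if remaining < 0:
        raise ValueError("budget too small for the pinned rules and summary")
    kept = []
    for turn in reversed(turns):  # walk from the newest turn backwards
        cost = count(turn)
        if cost > remaining:
            break
        kept.append(turn)
        remaining -= cost
    return pinned + kept[::-1]  # restore chronological order

context = build_context(
    "Rule: no fluff.",
    "Summary: draft done.",
    ["one two three", "four five", "six"],
    budget=9,
)
print(context)
```

The oldest turns fall out of the window first, while the rules and the summary are never candidates for trimming.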

Conclusion: Own the Context, Own the Result

Context Fragmentation is the ultimate hurdle for the power-users of 2026. However, by treating the AI context window as a limited resource that requires active management, you can bypass the “Golden Retriever” effect. Use JSON State-Saving, implement Attention Anchors, and never let the AI’s recency bias overwrite your “Ground Truth.”

Tech Troubleshooting Expert and Lead Editor at TechCrashFix.com. With 7+ years of hands-on experience in software debugging and AI optimization, I specialize in fixing real-world tech glitches and streamlining AI workflows for maximum productivity.
